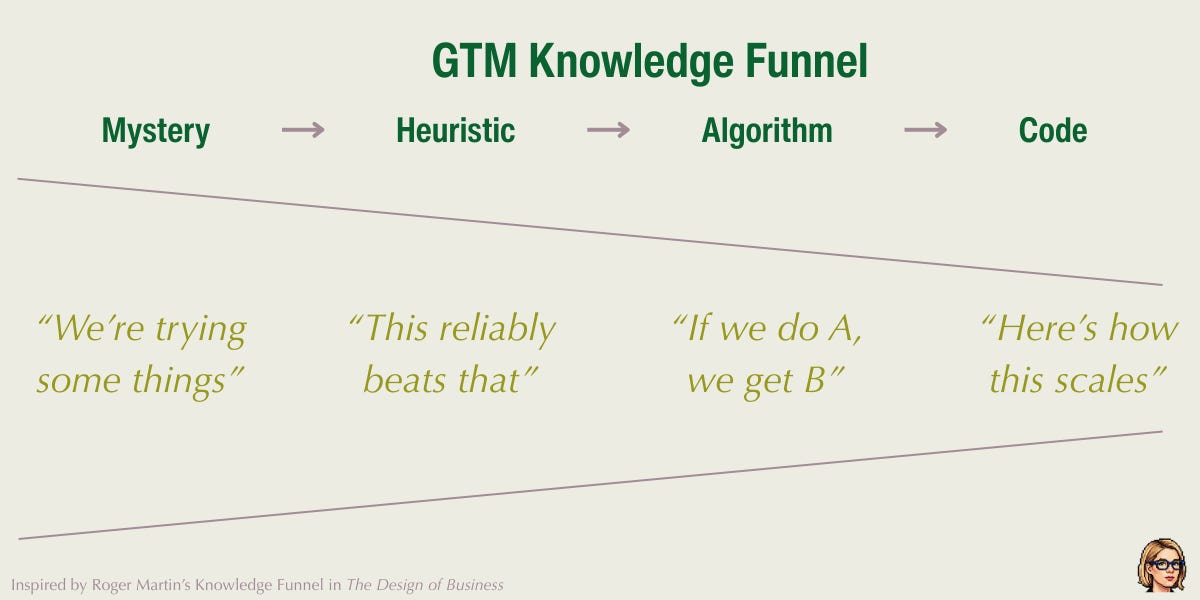

Mystery, Heuristic, Algorithm, Code

A framework for GTM now that the playbooks aren’t working

“ChatGPT was recommending us all summer,” the founder said. “If you searched for anything in our space, we were the answer. Pipeline was incredible. It felt natural.”

“What happened?” I asked.

“It stopped. Literally overnight. We went from being the answer to not showing up at all.”

“Do you know why it was recommending you in the first place?”

A long pause. “No. Not really.”

“Any hypotheses?”

“...”

Knowledge grows in predictable ways

There’s a framework from the strategy thinker (and my lifelong mentor) Roger Martin, which I use in most growth workshops I run. Almost nobody in tech has heard of it, which I always find strange, because it broadly explains how knowledge matures in any field. Roger named it the knowledge funnel.

Here’s how I see it applied to GTM specifically:

A mystery is something you don’t understand at all. You don’t know what works. Spaghetti, meet wall.

A heuristic is a rule of thumb. “When we do dinners, a few leads turn into deals.” You kind of know what you’re doing… kinda? Or at least that’s what you tell your board. And yourself. Mostly yourself.

An algorithm is a repeatable system. If I do X, Y happens. Megalomania can set in: you are invincible!! Until you raise the next round and see the new growth targets.

Code is full automation. Just execute. Living might be easy here, but now you sleep with one eye open, wondering when everyone else figures out what you figured out.

Most GTM teams think the goal is to get to code and luxuriate there. But the funnel doesn’t actually end at code.

The edge always collapses

I have a photo of me with Billy Beane, the Oakland A’s GM who is the hero of Moneyball. Asana brought him in to keynote a customer event in 2023, and I hung around him like a golden retriever for the afternoon. The CMO, whose personal hero was Britney Spears, thought this was the single funniest thing she’d ever seen.

I love Billy Beane partly because statistics is supercool (obvi), partly because his story is the knowledge funnel in its most ruthless form:

Beane and his sabermetricians turned the link between on-base percentage and team wins from a mystery into an algorithm. And then? Well, it became a verifiable method, a code, so everyone else stole it. Within a decade, all MLB teams had data science teams.

So the edge collapsed, and now baseball’s winning edge is back to the mystery, figuring out the next inefficiency that hasn’t been priced in yet. The cycle repeats, as it always will.

This is what’s happening in the AI era: the playbooks that carried GTM teams for a decade are burning. Even large teams are dropping back into mystery, or trying to decode heuristics for what still works, what doesn’t work, and what’s become actively counterproductive.

Beware the danger zone

In the mystery stage, AI is almost useless. It has no signal to work with, so it either hallucinates a plan or gives you something generic. Clever people spot this issue fast.

The less obvious danger, the one Richard Feynman warned against when he said that you must not fool yourself, and that you are the easiest person to fool, is in the heuristic zone.

When you have a rough pattern and you hand it to AI, you get back something that looks, walks, and quacks like an algorithm. It has structure! Steps! Confidence! It reads like something a CMO would give you. It’s tempting exactly because the AI polish makes it look soooooo gooooood 🤩

That’s Feynman’s warning: you’re the easiest one to fool… and AI is your willing accomplice.

In my six years at Asana, we built up the enterprise sales team then laid it off, on Three. Separate. Occasions. The first time I was as gung-ho to go enterprise as anyone. The second time I had serious doubts, and was later ashamed that I went along with it again without voicing my concerns.

The third time? I was on the revenue leadership team, and I remember sitting in yet another enterprise surge planning meeting and thinking: I know exactly how this show ends. I’ve already seen it… twice. I just can’t sit through it a third time.

By the third time around, I knew in my bones that Asana was a product-led-growth business. People needed to see and try the product, then teams needed to have enough traction to enable our sales team to sell a larger deployment.

And yet, in this same meeting, one of my peers who had been there for both the previous surges and layoffs proposed cutting back on PLG and rerouting resources to enterprise sales to make a “real go of it.” Again. Or, more accurately: again, again.

“But we’ve already tried this twice and it didn’t work,” I argued. “We need a real reason to believe this time will be different!”

“Why should we have to prove ourselves?” the exec shot across the room. “We should just go for it!”

That’s AI’s failure mode, made human. Someone who skipped the proving phase and just masked it in decisiveness. AI accelerates that move dramatically, and also gives it much better slides.

I see this pattern everywhere now.

A founder I was advising recently had done his Series A announcement. He got almost 2 million Twitter views and thousands of Twitter likes, plus a boatload of pipeline from it. He thought he knew what worked: his slick two-minute launch video. So he wanted to do another one for the Series B! He was going to spend a lot more to make an even better video this time. He was good to go, and showed me the plan with a buncha swagger.

But we paused to dive into the data together. That Twitter video? It had abysmal retention: 96% of viewers didn’t even make it through the first 20 seconds. But if people barely watched the video… what was driving the pipeline?? Turns out that in the first few seconds, the video had a text card that named the company, the category, and the use case. And between that, the tweet copy, and the wave of retweets and endorsements, people got what the company was all about without watching much of the video at all.

It wasn’t just the video. Focusing only on production meant doubling down on too narrow a variable, and building a GTM strategy on a misdiagnosis.

Always remember: You. Are. The. Easiest. One. To. Fool.

Seeing things as they are

Before you can push a heuristic to an algorithm, you have to build a mental model of what’s going on.

Take each outcome bucket that’s working, and dig one level deeper: what’s driving it? When you actually list the possible contributors, people can usually narrow it down.

“Is it the advertising?” “No, we barely spend.” “Content and SEO?” “We only just started that.” “Dinners?” “Yeah, when we do those we can often track a few opportunities back to each one.” “LinkedIn?” “Yes, we do a ton of that and we know people see it, but we’re not sure how much it’s contributing.”

That’s the move from mystery to heuristic. You went from “it’s black magic” to “I think it’s the dinners and LinkedIn.” You still can’t prove causation or measure the effect exactly. But you have a hypothesis, and a hypothesis is something you can test, measure, understand, and eventually build into a system.

Sometimes the upstream cause is obvious: if everyone’s talking about you, it’s probably not your banner ads. But often you have to go looking for it.

I do this work in workshops, usually with the founder and GTM leadership, sometimes with the Growth team. I start with a few simple questions: What are you doing right now? How’s it working? What makes it go well, and what makes it not? Then I probe. I’m trying to map every lever and figure out what stage each one is at.

If something is working and you understand why? Scale it.

A company I worked with discovered that dinners were their highest-converting channel: invite 12 people, see 1-2 conversions. Consistently. That started as a heuristic. They measured the patterns, clustered attendees by geography, automated invite lists with more engagement data. They went from 10 dinners a year to 40, and the pattern held. That’s heuristic becoming algorithm, and it only happened because they were rigorous about understanding what part of the dinner was doing the work.
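To make the dinner math concrete, here’s a minimal Python sketch. It only uses the numbers from the example above (12 invites, 1-2 conversions per dinner, 10 dinners a year growing to 40). The `DinnerProgram` class and the `pattern_held` check are hypothetical names invented for illustration, and "held" is assumed to mean "observed conversions are at least the low end of the expected range." It is not how that company actually measured anything.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class DinnerProgram:
    """Illustrative model of a dinner channel (all names are hypothetical)."""
    dinners_per_year: int
    invites_per_dinner: int = 12   # from the example: invite 12 people
    conversions_low: int = 1       # low end of "1-2 conversions"
    conversions_high: int = 2      # high end of "1-2 conversions"

    def conversion_rate_range(self) -> Tuple[float, float]:
        # Per-invite conversion rate implied by one dinner.
        return (
            self.conversions_low / self.invites_per_dinner,
            self.conversions_high / self.invites_per_dinner,
        )

    def yearly_conversions_range(self) -> Tuple[int, int]:
        # If the per-dinner pattern holds, yearly conversions scale linearly.
        return (
            self.dinners_per_year * self.conversions_low,
            self.dinners_per_year * self.conversions_high,
        )


def pattern_held(observed_conversions: int, program: DinnerProgram) -> bool:
    # Assumption: the pattern "held" if observed conversions reach at least
    # the low end of what the per-dinner heuristic predicts.
    low, _high = program.yearly_conversions_range()
    return observed_conversions >= low


before = DinnerProgram(dinners_per_year=10)
after = DinnerProgram(dinners_per_year=40)

print(before.yearly_conversions_range())  # (10, 20)
print(after.yearly_conversions_range())   # (40, 80)
```

The useful part is the check, not the multiplication: scaling from 10 to 40 dinners only counts as an algorithm if the per-dinner rate survives the scale-up. If observed conversions fall below the low end, you are back to a heuristic.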

And sometimes the problem isn’t that you don’t understand the channel, but that you’re inexplicably neglecting it. I had a conversation recently with a Series B founder who also told me dinners were one of his best channels. Great pipeline, strong conversation. I asked when the last one was. October. When’s the next one? April. FIVE MONTHS between activations of his highest-performing channel. Throwing an exec dinner is not planning a wedding. It’s more like planning brunch with some really flaky friends.

If it’s working, do more of it. A lot more.
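If it helps to see the lever-mapping exercise written down, here’s a rough Python sketch. The four stages come from the knowledge funnel described earlier; the `Lever` class, the `NEXT_MOVE` table, and the stage assigned to each example lever are illustrative assumptions, not a real workshop template.

```python
from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    MYSTERY = "mystery"
    HEURISTIC = "heuristic"
    ALGORITHM = "algorithm"
    CODE = "code"


# One suggested next move per stage, paraphrasing the essay.
NEXT_MOVE = {
    Stage.MYSTERY: "list possible contributors and form a hypothesis",
    Stage.HEURISTIC: "test and measure the hypothesis before scaling",
    Stage.ALGORITHM: "scale it: do a lot more of what works",
    Stage.CODE: "automate it and watch for the edge collapsing",
}


@dataclass
class Lever:
    name: str
    stage: Stage

    def next_move(self) -> str:
        return NEXT_MOVE[self.stage]


# Hypothetical stage assignments, loosely based on the examples above.
levers = [
    Lever("advertising", Stage.MYSTERY),
    Lever("LinkedIn", Stage.HEURISTIC),
    Lever("dinners", Stage.ALGORITHM),
]

for lever in levers:
    print(f"{lever.name}: {lever.stage.value} -> {lever.next_move()}")
```

The table is deliberately boring. The hard part is being honest about which stage each lever is really at, especially the ones you’d like to believe are already algorithms.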

Why legacy teams struggle to adopt AI & change

Everything I just described sounds straightforward on paper. In practice, it requires people who are comfortable exploring the unknown, and being wrong over and over again on their way to something right.

But as I’ve written before, most GTM teams are built to execute, not explore. And if you have a repeatable process, whether algorithm or code, AI can execute it now with very little human labor needed.

Your team ratio has to flip: fewer executors, more explorers, AI handling the rest. The problem is that you can’t flip your ratio just by retraining the people you have: executors don’t just fail to explore, they use their execution skills to avoid exploring altogether.

A CEO recently brought me in to help a team move faster. The team’s response was to spend several weeks carefully scoping exactly what they needed to move faster, drafting and redrafting proposals that detailed what it would look like to move faster. At the end of their extensive scoping process, they concluded… that they were already moving fast enough and didn’t need any help.

You cannot make a risk-minimizing executor into a risk-taking explorer just by giving them AI. The mindset is the blocker, not the tools.

Here’s what I’ve learned the hard way: people can change, but you can’t change people. If you want a team with a new mindset, you hire new people. Different people.

I regret to inform you that the playbook isn’t coming back

I’m genuinely excited about this. There’s a line from Shunryu Suzuki’s Zen Mind, Beginner’s Mind that I come back to constantly: “In the beginner’s mind there are many possibilities, but in the expert’s mind there are few.”

That’s what I loved about the early years at Dropbox: the empty slate.

Nobody handed us a playbook. We were figuring it out: trying things, seeing what worked, arguing about why. There were so many possibilities.

Then people codified what worked, and for a while the playbooks were real and the money was easy.

But something was lost. The world got smaller as the expert’s mind took over.

The core of the challenge is all mystery and heuristic now. That can feel terrifying, especially if you built your career on executing known systems.

But even when you do crack the code, Moneyball ends the same way every time: someone steals the algorithm, the edge collapses, and the cycle starts over.

The people who thrive are the ones who can always live in the land of not-knowing, who can form a hypothesis and be wrong and then form another one, and who don’t need a playbook to know they’re making progress because they can just feel it.

The playbook isn’t coming back. I think that might be fine.

This is the third in a series on AI-era GTM: The Trust Recession | The Imitation Crisis